Morph Compact

Blazing Fast Compaction

33,000 tok/s on a custom inference engine. Shrink context by 50-70% while keeping every surviving sentence verbatim. Your agents run for hours, not minutes.
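As a rough back-of-the-envelope sketch, the two figures above can be turned into latency and size estimates. The function names are illustrative, and the model assumes compaction time scales linearly with input tokens and ignores network overhead, which the page does not state.

```python
THROUGHPUT_TOK_PER_S = 33_000  # throughput figure quoted on this page


def compaction_seconds(tokens: int) -> float:
    """Estimated compaction time, assuming time is linear in input tokens."""
    return tokens / THROUGHPUT_TOK_PER_S


def remaining_tokens(tokens: int, reduction: float) -> int:
    """Tokens left after removing a `reduction` fraction (0.5 to 0.7 per the page)."""
    return round(tokens * (1 - reduction))


print(compaction_seconds(99_000))      # 3.0
print(remaining_tokens(100_000, 0.5))  # 50000
print(remaining_tokens(100_000, 0.7))  # 30000
```

Under these assumptions, a 100,000-token context would come back at roughly 30,000 to 50,000 tokens.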

Compaction that actually improves performance

Compaction, not summarization

Summarization rewrites your context. Factory's eval scored summarization 3.4-3.7 out of 5 on accuracy. Compact deletes filler and keeps every surviving sentence word-for-word.
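The word-for-word property is something you could check yourself. The sketch below is not part of Compact: the helper names and the naive sentence splitter are assumptions for illustration. It tests whether every sentence in an output appears unchanged, and in the same order, in the original.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def is_verbatim_compaction(original: str, compacted: str) -> bool:
    """True if each compacted sentence appears word-for-word, in order, in the original."""
    remaining = iter(split_sentences(original))
    # `s in remaining` consumes the iterator up to the match, which enforces order
    return all(s in remaining for s in split_sentences(compacted))


original = (
    "Sure, happy to help! The build fails on line 42. "
    "Let me know if that makes sense. The fix is to pin numpy to 1.26."
)
compacted = "The build fails on line 42. The fix is to pin numpy to 1.26."
summary = "The build breaks at line 42; pin numpy."

print(is_verbatim_compaction(original, compacted))  # True
print(is_verbatim_compaction(original, summary))    # False
```

A rewritten summary fails the check because its sentences no longer exist in the source, while a deletion-only output passes.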

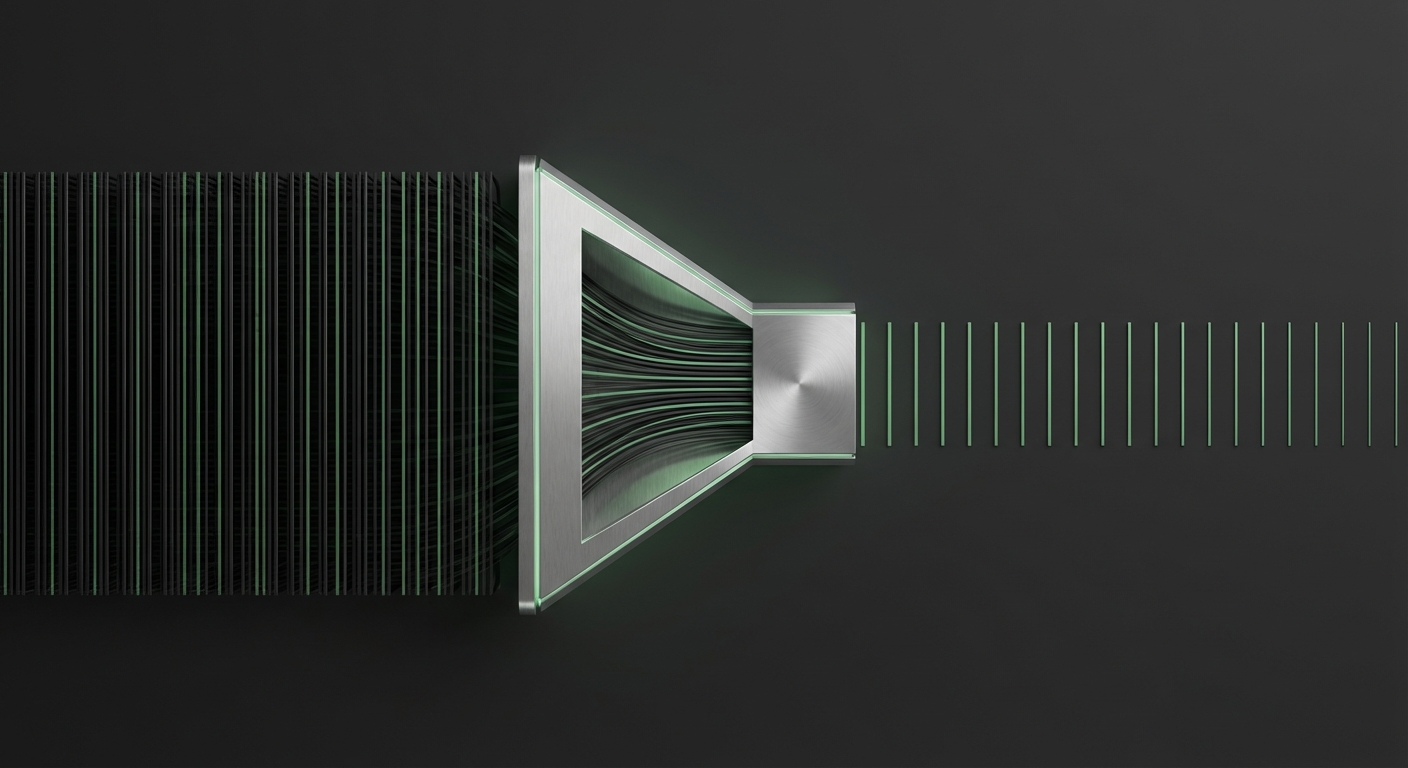

Under 3 seconds at 33,000 tok/s

Custom inference engine. Fast enough to run inline before every LLM call, not just at the 95% capacity cliff.
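Running compaction inline could look something like the sketch below. It is a minimal illustration, not the Compact API: `compact` and `call_llm` are hypothetical callables you would supply, and `keep_recent` is an assumed knob for leaving the latest messages untouched.

```python
from typing import Callable

Message = dict[str, str]


def call_with_compaction(
    messages: list[Message],
    compact: Callable[[str], str],             # hypothetical compaction function
    call_llm: Callable[[list[Message]], str],  # hypothetical model client
    keep_recent: int = 2,
) -> str:
    """Compact older messages on every call, then forward everything to the model."""
    if keep_recent > 0:
        older, recent = messages[:-keep_recent], messages[-keep_recent:]
    else:
        older, recent = messages, []
    compacted = [{**m, "content": compact(m["content"])} for m in older]
    return call_llm(compacted + recent)


# Toy stand-ins so the sketch runs without any external service
FILLER = {"Sure, happy to help!", "Let me know if that makes sense."}


def demo_compact(text: str) -> str:
    # Drop known filler lines and keep every other line unchanged
    return "\n".join(line for line in text.splitlines() if line not in FILLER)


def demo_llm(messages: list[Message]) -> str:
    # Echo what the model would receive
    return " | ".join(m["content"] for m in messages)


history = [
    {"role": "assistant", "content": "Sure, happy to help!\nThe build fails on line 42."},
    {"role": "user", "content": "What is the fix?"},
]
print(call_with_compaction(history, demo_compact, demo_llm, keep_recent=1))
# The build fails on line 42. | What is the fix?
```

Because the wrapper runs on every call, the context never has to reach a capacity threshold before it is trimmed.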

Works with web search

Agents running web searches pull back 10k+ tokens per page. Compact shrinks search results to the signal in under 300 ms, so downstream models stay fast and don't lose the thread.

Enable 24+ hour agent sessions

1.5 min vs 2.5s compaction